Common Errors in Gendered AI Translation

AI translation often struggles with gender-specific grammar, leading to errors that misrepresent speakers and confuse audiences. For example, in Hebrew - where 80% of verbs are gendered - AI tools frequently default to masculine forms, even when the speaker is female. These mistakes can undermine trust, create awkward interactions, and even cause business losses.

Key Issues:

- Defaulting to Male Forms: AI often uses masculine defaults, like translating "I am going" into Hebrew as "ani holech" (masculine) instead of "ani holechet" (feminine).

- Gender Stereotyping in Job Titles: Professions like "doctor" and "nurse" are often inaccurately translated based on outdated stereotypes.

- Mishandling Gender-Neutral Source Languages: English phrases like "I am a teacher" carry no gender cue, so AI tools must guess when translating into gendered languages, and they often guess wrong.

Why It Happens:

- Biased Training Data: AI models are trained on datasets that overrepresent masculine forms.

- Lack of Context Awareness: Translation systems rely on statistical probabilities, missing speaker or audience cues.

Solutions:

- Human Review: Professional linguists ensure translations align with intended meanings.

- Gender-Sensitive Tools: Advanced systems like baba account for gender nuances, achieving over 95% accuracy in Hebrew.

- User-Specified Gender Options: Allowing users to define gender context improves translation accuracy.

Accurate gendered translations matter for clear communication, trust, and professionalism. Tools designed for gender-sensitive languages, combined with human expertise, can reduce errors and improve interactions.

Common Gender Translation Errors

AI Gender Translation Error Rates in Hebrew and Gendered Languages

These errors distort communication by misrepresenting both the speaker and the intended audience, often leading to confusion and reinforcing stereotypes.

Defaulting to Male Forms

One of the most frequent issues is the automatic use of masculine defaults. For instance, typing "I am going" into a typical translation tool produces "ani holech" (masculine) in Hebrew, regardless of whether the speaker is male or female. The correct feminine form, "ani holechet", is often ignored. This happens because AI systems are trained on datasets that lean heavily toward male-centric examples.

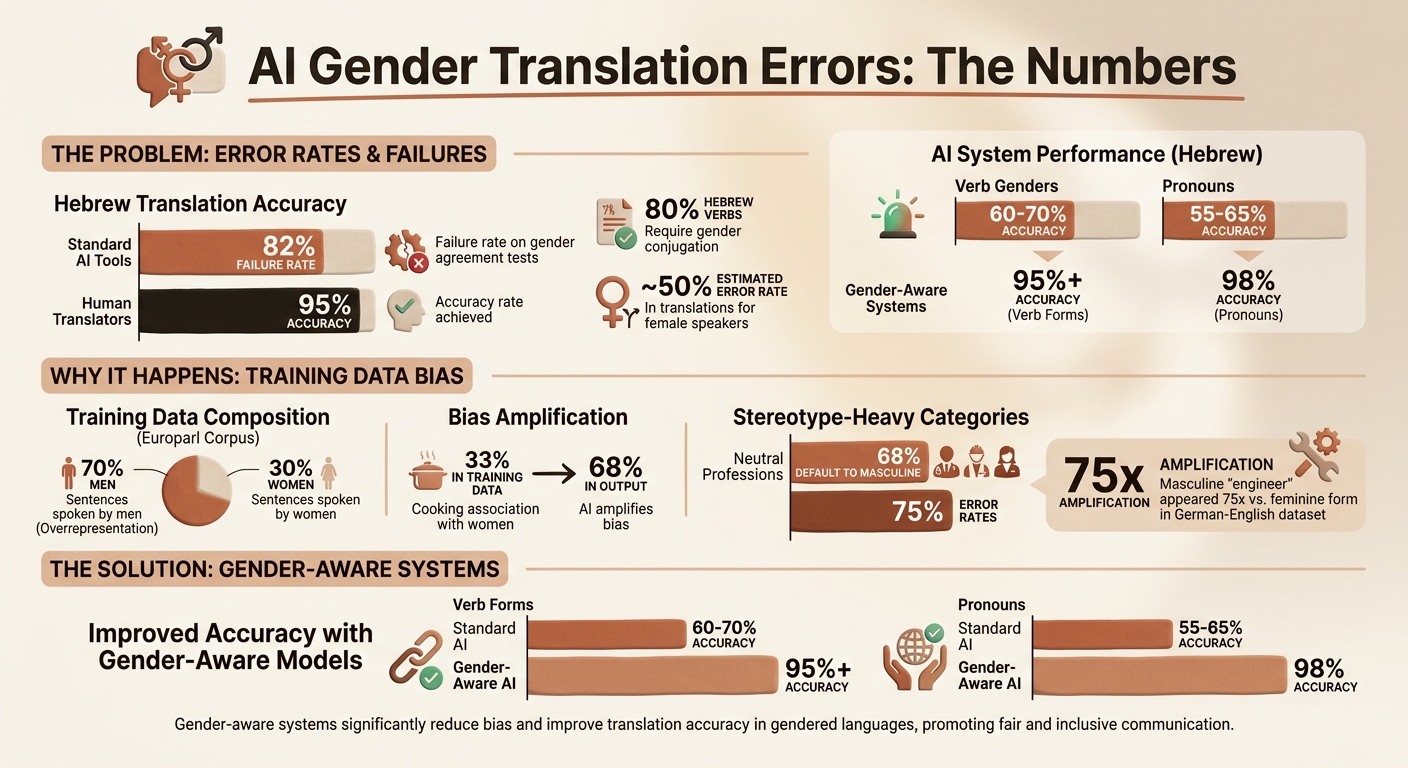

In March 2026, baba Technology highlighted this problem when a female voice saying "I love you" was translated as the masculine "Ani ohev otcha" instead of the feminine "Ani ohevet otcha." A 2024 study from Tel Aviv University revealed that standard translation tools fail 82% of gender agreement tests in Hebrew, compared to human translators who achieve 95% accuracy. Considering that 80% of Hebrew verbs require gender conjugation, this leads to errors in nearly half of translations for female speakers.

But the problem doesn’t stop at verb forms. AI systems also perpetuate gender stereotypes, compounding the issue.

Gender Stereotyping in Job Titles

Translation tools often assign gender to job titles based on outdated stereotypes. For example, translating "doctor" from English to Spanish results in "el doctor" (masculine), while "nurse" becomes "la enfermera" (feminine). Both words are gender-neutral in English; the gender in the output comes from the AI’s assumptions, not from the source text.

A 2023 study by Stanford found that 68% of AI translations for neutral professions like "engineer" or "teacher" defaulted to masculine forms in gendered languages. In stereotype-heavy categories, error rates reached as high as 75%. For instance, in French, "engineer" is translated as "ingénieur" (masculine), reinforcing biases embedded in training data. These stereotypical translations show why AI translation into any gendered language, Hebrew included, needs contextual and cultural cues to be accurate.

Mishandling Gender-Neutral Source Languages

English, which doesn’t mark gender on verbs or adjectives, poses a unique challenge when translated into languages like Hebrew. Take the phrase "I am a teacher" - without gender cues, AI systems default to the masculine "ani moreh" instead of the feminine "ani mora", even if the speaker is female.

In a 2025 business scenario, an English-to-Hebrew email translation rendered "I will attend the meeting" using masculine forms, leading to a female executive being misgendered in front of Israeli clients. Similarly, the Turkish word "doktor", which is gender-neutral, is often translated into Hebrew as "rofe" (masculine) even when the doctor is a woman and the feminine "rofa" is correct. These missteps not only cause miscommunication but also undermine trust in professional and personal interactions where gender accuracy is critical.


Why These Errors Happen

To understand why these translation errors persist, we need to examine their root causes. They primarily arise from two factors: the biases in training data and the systems' inability to process contextual cues as humans do.

Biased Training Data

AI translation models are trained on massive datasets sourced from the internet, books, and public documents. Unfortunately, these datasets often reflect historical gender biases. For example, the Europarl corpus - a widely used training dataset - shows a stark imbalance, with only 30% of sentences spoken by women. This overrepresentation of masculine forms causes AI systems to default to them.

"Automated translation engines amplify the biases of their training data sets."

- Dr. Nicolas Kayser-Bril, AlgorithmWatch

The impact of this amplification is significant. A 2017 study found that when "cooking" was associated 33% more frequently with women than with men in the training data, the trained model amplified the gap to 68%. Similarly, in a German–English dataset, the masculine form of "engineer" appeared 75 times more often than the feminine form. In languages like Hebrew, where gender conjugation affects about 80% of verbs, this bias can result in incorrect translations nearly half the time when the speaker is female.

The problem is compounded because standard models lack the parameters needed to account for factors like the speaker's gender, the listener's gender, or the group's composition. Even with better training data, these models struggle to overcome their reliance on statistical patterns.
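The imbalance described above can be measured directly. The sketch below counts masculine and feminine forms in a tiny made-up corpus; the token list and the form pairs are illustrative assumptions, not data from any of the studies cited here.

```python
from collections import Counter

# Hypothetical toy corpus of transliterated Hebrew tokens (illustrative only).
corpus = "holech holech holechet holech moreh moreh mora holech".split()

# Hypothetical (masculine, feminine) form pairs to compare.
pairs = [("holech", "holechet"), ("moreh", "mora")]


def masculine_share(tokens, masc, fem):
    """Return the fraction of occurrences of a pair that use the masculine form."""
    counts = Counter(tokens)
    total = counts[masc] + counts[fem]
    return counts[masc] / total if total else None


# Map each masculine form to its share of the pair's total occurrences.
shares = {masc: masculine_share(corpus, masc, fem) for masc, fem in pairs}

for masc, share in shares.items():
    print(f"{masc}: {share:.0%} masculine")
```

A model trained on text with shares like these has more evidence for the masculine form of each pair, which is the starting point for the defaulting behavior described in this section.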

Missing Context in AI Processing

Another major challenge lies in how translation systems process text. Most systems lack the ability to clearly identify the speaker or the audience, forcing them to rely on statistical probabilities rather than nuanced human context.

A common approach in AI translation involves using English as an intermediary "pivot" language. Since English has fewer gender-specific markers - terms like "teacher" or "doctor" are neutral - critical gender cues from the original language can be lost. When translating into a highly gendered language like Hebrew, the system often guesses the gender based on statistical likelihood rather than context.

"Machine translation seems prone to exploit statistical biases in its training data rather than rely on more meaningful context cues."

- Gabriel Stanovsky, Researcher

This reliance on statistical patterns explains why many translation systems achieve only 60–70% accuracy for verb genders and 55–65% accuracy for pronouns in Hebrew. Without explicit controls for factors like the speaker's gender or the group composition, algorithms tend to favor the most statistically probable output - often masculine - resulting in translations that sound awkward or unnatural to native speakers.
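As a rough illustration of the difference an explicit control makes, the sketch below contrasts picking the most frequent form with picking the form that matches a supplied speaker gender. The frequency table, the counts, and the `translate` function are hypothetical; no real translation system exposes exactly this interface.

```python
from typing import Optional

# Hypothetical frequency table: for each English phrase, the Hebrew form per
# gender and how often it was seen in training data (made-up counts).
FORM_COUNTS = {
    "I am going": {
        "masculine": ("ani holech", 90),
        "feminine": ("ani holechet", 10),
    },
}


def translate(phrase: str, speaker_gender: Optional[str] = None) -> str:
    """Pick a Hebrew form, using the speaker's gender when it is provided."""
    forms = FORM_COUNTS[phrase]
    if speaker_gender in forms:
        # Explicit context wins over corpus statistics.
        return forms[speaker_gender][0]
    # No context: fall back to the statistically most frequent form.
    return max(forms.values(), key=lambda form: form[1])[0]


print(translate("I am going"))              # no context supplied
print(translate("I am going", "feminine"))  # speaker gender supplied
```

Without the `speaker_gender` argument the function can only return whatever the counts favor, which mirrors how a context-free system ends up with masculine output.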

How to Fix Gender Translation Errors

Addressing gender translation errors involves a mix of human expertise, advanced tools designed for gender-sensitive languages, and giving users clear options to specify gender context. These errors often arise from biased datasets and a lack of contextual understanding, but targeted strategies can help resolve them.

Human Review and Correction

Professional linguists play a crucial role in spotting and fixing errors that AI systems might overlook[2][3]. A thorough, multi-step review process ensures that every gender-marked word aligns with both the speaker's intent and the audience's expectations. This is especially critical in languages like Hebrew, where gender impacts around 80% of verbs and can involve over 11 variations depending on who is speaking and to whom[2]. Feeding these corrections back into the AI's training process creates a feedback loop that reduces the likelihood of repeated mistakes in the future[4].

Gender-Aware Translation Tools

Specialized tools designed for gender-complex languages are another powerful solution. For instance, tools like baba use 22 customized AI prompts and account for 11 gender-aware variations to deliver accurate translations. These systems analyze factors like the speaker, audience, and context to choose the correct gender forms. The results are impressive, with gender-aware systems achieving over 95% accuracy for verb forms and 98% for pronouns[1]. Beyond accuracy, these tools also handle modern slang while respecting cultural nuances[2].

Offering Multiple Gender Options

Another effective approach is letting users specify gender context instead of defaulting to masculine forms. Leading translation platforms now provide clear options, such as "speaking to one woman", "speaking to one man", "speaking to a mixed group", or "speaking to mostly women." This ensures translations are tailored to the intended context[1][3]. For example, a woman drafting a business email can choose feminine verb forms, while someone addressing a group can specify whether it’s all-men, all-women, or mixed, ensuring accurate plural forms[2]. By making gender selection an explicit choice, these tools remove much of the ambiguity that often leads to translation errors.
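A minimal sketch of how such options might be modeled, using the "I love you" example: the speaker's gender selects the verb form and the audience selects the object pronoun. The enum names and the `i_love_you` helper are hypothetical and do not describe any particular app's implementation; the mixed-group entry follows the conventional rule of using the masculine plural.

```python
from enum import Enum


class Speaker(Enum):
    MALE = "male"
    FEMALE = "female"


class Audience(Enum):
    ONE_MAN = "one man"
    ONE_WOMAN = "one woman"
    MIXED_GROUP = "mixed group"
    ALL_WOMEN = "all-women group"


# The verb agrees with the speaker.
VERB = {Speaker.MALE: "ohev", Speaker.FEMALE: "ohevet"}

# The object pronoun ("you") agrees with the audience.
OBJECT = {
    Audience.ONE_MAN: "otcha",
    Audience.ONE_WOMAN: "otach",
    Audience.MIXED_GROUP: "etchem",  # masculine plural by convention
    Audience.ALL_WOMEN: "etchen",
}


def i_love_you(speaker: Speaker, audience: Audience) -> str:
    """Build a transliterated 'I love you' for the chosen speaker and audience."""
    return f"ani {VERB[speaker]} {OBJECT[audience]}"


print(i_love_you(Speaker.FEMALE, Audience.ONE_MAN))
print(i_love_you(Speaker.MALE, Audience.ALL_WOMEN))
```

Because both choices are explicit inputs, nothing is left for the system to guess, which is the point of offering the options in the first place.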

Conclusion

Gender translation errors can throw a wrench into communication, create misunderstandings, and hurt professionalism when dealing with Hebrew. When AI defaults to masculine forms or overlooks gender nuances, the results often feel unnatural to native speakers. For Hebrew learners and professionals working with Israeli counterparts, these missteps can harm relationships and credibility.

Fixing these issues isn’t as simple as applying quick manual corrections. It requires a combination of AI tools and human translators equipped to handle Hebrew’s gender complexities. While professional linguists provide valuable insights, advanced translation tools step in to tackle these challenges on a broader scale. For example, baba uses 22 specialized AI prompts and 11 gender-aware variations to manage Hebrew’s intricate grammar - something generic translators just can’t match.

These details matter in real-life scenarios. A woman writing a business email needs feminine verb forms to maintain professionalism, and addressing a mixed group requires precise plural forms that reflect the audience. These nuances are essential for speaking natural, respectful Hebrew. By addressing these translation gaps, you enhance communication and build trust with your audience.

Whether you’re learning Hebrew or working in a professional setting, using gender-aware translation tools is a game-changer. Check out baba's gender-intelligent translation today - available on iOS and Android, with a 5.0-star rating from users who appreciate its deep understanding of Hebrew’s intricacies.

FAQs

How can I make a translation use feminine Hebrew forms?

To make sure your translation uses feminine Hebrew forms, it's important to specify the gender context when working with baba. The app is designed to automatically adjust verbs, adjectives, and pronouns based on the gender you choose.

You can activate the feminine setting directly in the app or include this preference in your prompt. baba’s AI is built to recognize and apply these gender-specific variations, creating translations that feel accurate and natural for feminine Hebrew.

What should I do when English has no gender clues but Hebrew requires them?

When translating from English to Hebrew, keep in mind that Hebrew has very few gender-neutral forms. Most verbs, adjectives, and pronouns need to agree with the correct gender. To get this right, consider both the speaker and the audience. If you can, clarify the gender context beforehand. Alternatively, you can use a tool like baba, which adjusts translations dynamically to reflect the intended gender, making the communication sound more natural and precise.

How can I tell if a Hebrew translation misgendered the speaker or audience?

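To spot misgendering in a Hebrew translation, pay close attention to how verbs, adjectives, and pronouns align with the speaker's gender (male, female, or group) and the context of the audience. For instance, "I want to buy" is "ani rotze liknot" for a male speaker and "ani rotza liknot" for a female speaker. In unvocalized text both are written אני רוצה לקנות, so this particular difference shows up only in speech, transliteration, or vowel-pointed text (רוֹצֶה versus רוֹצָה). Other forms, such as "holech" and "holechet" (הולך / הולכת), differ in writing as well. Leveraging a gender-aware tool can assist in ensuring these translations are accurate and contextually appropriate.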
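One rough way to automate part of this check is to scan a transliterated first-person sentence for forms of the wrong gender. The sketch below does that with a small hand-built table of pairs taken from the examples in this article; the `find_mismatches` helper is hypothetical, and a real checker would need a full morphological analyzer rather than a word list.

```python
from typing import List

# Masculine -> feminine pairs from the examples in this article (transliterated).
MASC_TO_FEM = {
    "holech": "holechet",
    "ohev": "ohevet",
    "moreh": "mora",
    "rofe": "rofa",
    "rotze": "rotza",
}
FEM_TO_MASC = {fem: masc for masc, fem in MASC_TO_FEM.items()}


def find_mismatches(transliteration: str, speaker_gender: str) -> List[str]:
    """Return words whose gender conflicts with the speaker's gender.

    Only meaningful for first-person sentences, where the listed words
    describe the speaker; words about other people would be flagged wrongly.
    """
    wrong_forms = MASC_TO_FEM if speaker_gender == "feminine" else FEM_TO_MASC
    words = [word.strip('.,!?"').lower() for word in transliteration.split()]
    return [word for word in words if word in wrong_forms]


print(find_mismatches("ani holech", "feminine"))
print(find_mismatches("ani holechet", "feminine"))
```

An empty result does not prove the translation is correct; it only means none of the listed forms conflict with the stated speaker gender.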